Technology

-

Sponsored by Cleverbridge

Software sprawl is becoming a margin problem for SaaS CFOs

The next phase of SaaS will reward companies that combine commercial ambition with operational discipline.

By Markus Scheuermann, CFO, Cleverbridge • June 1, 2026 -

Mid-market CFOs confront growing execution strain

During H1 2026 discussions hosted by the CFO Alliance, finance leaders describe rising pressure tied to AI implementation, healthcare inflation and operational resilience.

By Adam Zaki • May 29, 2026 -

Explore the Trendline➔

Trendline: The CFO Strategy for Artificial Intelligence

Artificial intelligence’s impact on the office of the CFO continues to evolve, and finance chiefs must be aware of the opportunities it will create for growth.

By CFO.com staff -

Uber CFO sees driverless tech progressing in ‘fits and starts’

After betting big on the future of autonomous vehicles for years, the ridesharing company now appears to be taking a more measured approach.

By Dan Niepow • May 28, 2026 -

Anthropic surpasses OpenAI with near-trillion dollar valuation

Anthropic’s new $965 billion valuation comes as Claude adoption accelerates across corporate finance.

By Adam Zaki • May 28, 2026 -

Claude pricing raises new budgeting questions for CFOs

As Anthropic’s model becomes more prevalent across finance, CFOs may have to pay closer attention to token-based billing and its impact on the growing cost of enterprise AI usage.

By Adam Zaki • May 28, 2026 -

Q&A

Thrive CFO on standing out in a ‘fragmented’ IT marketplace

Named to the top financial seat of IT managed services provider Thrive in January, Matt Kosovsky talks through the complexities of the tech sector today.

By Dan Niepow • May 28, 2026 -

Brex co-founder’s hiring demands expose AI-era labor tensions

As tech companies cut jobs for AI efficiency, a recent LinkedIn post from Henrique Dubugras highlights tensions between AI-native labor expectations and younger workers’ views on burnout.

By Adam Zaki • May 27, 2026 -

Sponsored by Billtrust

The signals your revenue line won’t show you: An early-warning system for credit and revenue risk

Four AR signals that move before your revenue does.

By David Zwick, CFO, Billtrust • May 26, 2026 -

Inside Claude’s rapid expansion across corporate finance

Big Four firms, Wall Street banks and finance teams are continuing to embed Anthropic’s AI platform into forecasting, reporting and day-to-day finance workflows.

By Adam Zaki • May 21, 2026 -

Digital transformation tops list of factors reshaping CFO role

Recent developments have “sharply accelerated” the pace of change for finance chiefs, a new report contends.

By David McCann • May 20, 2026 -

Only 3% of finance leaders are skeptical of future AI payoffs: survey

AI investment is rising as finance executives report faster returns and prepare for a more active M&A environment, even as companies continue expanding finance teams, a new survey suggests.

By Adam Zaki • May 19, 2026 -

Sponsored by Cleverbridge

Agentic commerce is coming. Most businesses aren’t ready.

As AI changes how software is bought, manual sales processes put margins, forecasting and revenue at risk.

By Richard Stevenson, CEO, Cleverbridge • May 18, 2026 -

Growing challenge of recognizing AI-generated content heightens risk

New research reveals 48% of employees incorrectly identify AI-generated workplace messages, with 74% confident in their wrong answers.

By David McCann • May 14, 2026 -

New surveys show AI gains are uneven across finance

New data shows gains in decision-making and FP&A, while other data points to ongoing accounting workflow inefficiencies.

By Adam Zaki • May 13, 2026 -

AI helps firms with efficiency, but most don’t trust it to drive growth

Overcoming today’s weighty business challenges demands a re-assessment of automation’s cutting edge, EY counsels.

By David McCann • May 11, 2026 -

Sponsored by Woodrow

AI in finance: When human-in-the-loop means humans doing the work

"Human-in-the-loop" shouldn't mean humans doing all the work.

By Sidharth Kakkar • May 11, 2026 -

7 ideas from Consensus that show crypto’s shift toward traditional finance

At the "Super Bowl of Blockchain" in Miami Beach this week, stablecoins took center stage as crypto hits a crossroads, potentially shifting how future CFOs will manage liquidity.

By Adam Zaki • May 8, 2026 -

Just 28% of finance pros see finance AI tools delivering measurable results

Larger finance departments view their return on artificial intelligence as even less impactful than smaller ones do.

By David McCann • April 29, 2026 -

Financial trade group creates stablecoin certificate for treasury

The Association for Financial Professionals has partnered with fintech Kyriba on a new stablecoin certificate course aimed at addressing a “critical gap facing finance and treasury leaders today.”

By Dan Niepow • April 28, 2026 -

Opinion

Why enterprise AI still isn’t delivering financial returns

Companies are pouring billions into artificial intelligence, yet sustained financial impact remains elusive.

By Daniel Schmeltz • April 28, 2026 -

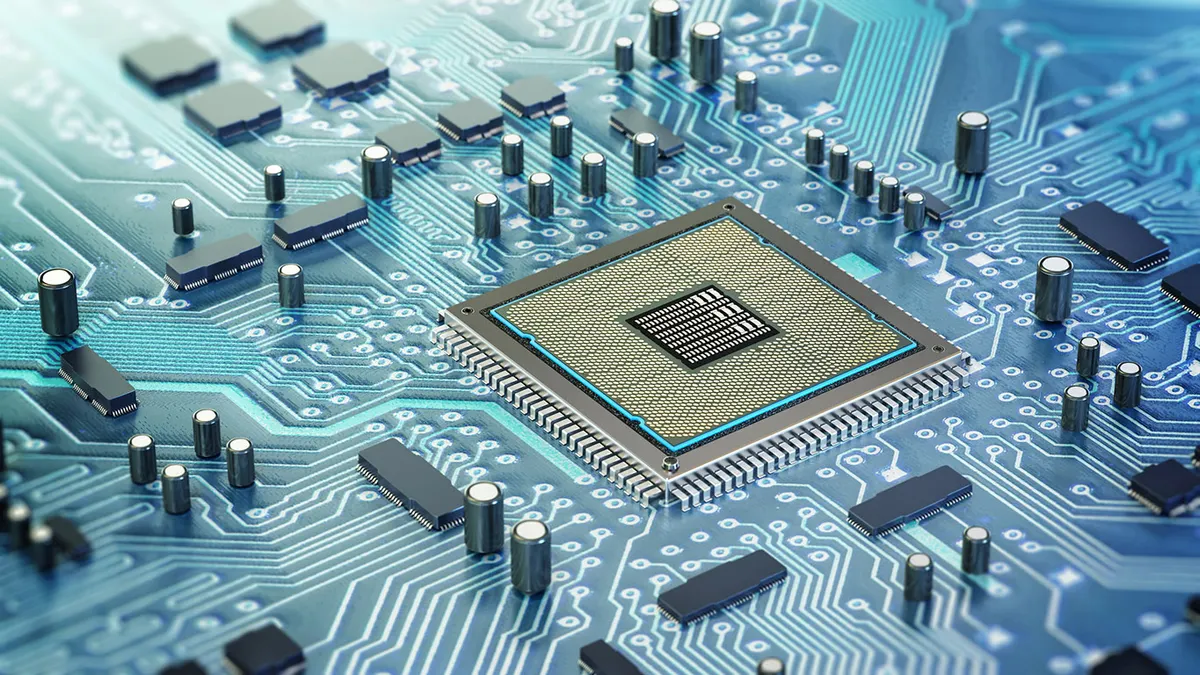

Suppliers to AI companies are big winners of spending surge

Many of the most successful players in the AI arena, such as CoreWeave, Nvidia and AMD, are making the chips and memory modules that make the technology possible.

By David McCann • April 27, 2026 -

Sponsored by Charted (formerly SquareWorks Consulting)

What CFOs get wrong when evaluating AI-powered invoice processing in NetSuite

How to quantify AI success for invoice processing in NetSuite

By Bernardo Enciso • April 27, 2026 -

Finance functions ramp up internal AI budgets

The key to generating value from artificial intelligence projects within finance is scaling them to full production, according to a new Bain & Co. report.

By David McCann • April 23, 2026 -

Opinion

The best AI model still fails 1 in 5 accounting tasks

DualEntry’s CFO unveils insights from testing 19 AI models on 101 accounting tasks to evaluate their accuracy and efficiency. The results, he writes, “should concern CFOs buying into the hype.”

By Woosung Chun • April 22, 2026 -

Sponsored by Billtrust

Revenue matters. But cash velocity defines financial health.

Revenue looks strong, but slowing payments tell a riskier story. Cash velocity is what matters.

By David Zwick, CFO, Billtrust • April 20, 2026