As employers continue experimenting with new forms of technology in hiring and firing decisions, workers may, at times, find themselves at the mercy of algorithms.

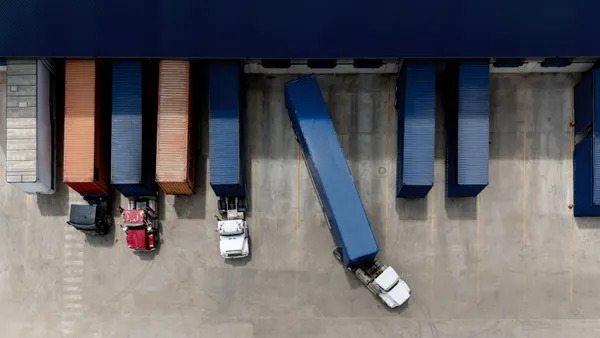

That’s because, experts say, there are currently scant legal protections surrounding the use of artificial intelligence and other new tech tools in making such choices. Oracle’s decision to lay off a likely sizable, though still publicly unknown, portion of its workforce allegedly by algorithm is just the latest iteration of a long-running trend.

Several media outlets reported that the tech giant is laying off thousands of workers, with some putting the figure as high as 30,000. The company itself has not publicly confirmed either the depth or even veracity of the alleged job cuts, though several former employees have taken to Reddit, LinkedIn and other social media platforms to share their accounts of being let go.

One LinkedIn post from a longtime Oracle employee suggested that Oracle used an algorithm to target “mid-level managers,” especially those with “outstanding stock options.” Though definitions vary, the term “algorithm” in this context generally describes a computer process based on strict rules.

The former employee later edited the post to pare back the claim and said she did not know for sure it was true, but media outlets had already picked it up.

One article by Toronto-based finance news website Moneywise pointed out a seeming incongruity: Hilary Maxson, Oracle’s newly appointed finance chief, joined the company with $26 million in stock options at a time when thousands of lower-level workers were losing their jobs.

Oracle didn’t respond to emailed questions about whether it used an algorithm to make the determinations.

Regardless, it is true that employers have been deploying algorithms in hiring and firing decisions for a while now. And it’s worth noting that finance and human resources teams, for now, still have a great deal of legal leeway in using technology for such decisions.

“The law has not fully caught up to where we are from a technological standpoint,” said Lisa Koblin, a partner in the labor and employment practice group at law firm Saul Ewing, in an interview.

But, she noted, several state and local governments are attempting to put guardrails in place. She pointed to New York City, which has passed local legislation that prohibits employers from using an “automated employment decision tool … unless they ensure a bias audit was done and provide required notices,” per the law.

Colorado’s sweeping legislation on artificial intelligence, which is set to go into effect in June, also requires companies to notify current and prospective employees if they use the technology for a range of decisions, including recruitment, hiring and discharge. Illinois has passed similar legislation.

“We’ve seen a trend throughout the country of bills and legislation popping up to address AI,” Koblin said.

Koblin pointed out that there is still a range of protections in existing employment law. Employers are forbidden from making decisions about hiring and firing based on protected categories, for instance. They also can’t make such decisions in retaliation for employees taking a leave of absence.

As for the use of AI, Koblin noted that many organizations are using such tools to evaluate employees’ performance, but she suggested they do so with care.

“Employers need to be cautious when looking at data that appears to use objective criteria,” she said.

But employers also have leeway to decide which employees to cut on cost grounds. “Certainly, you can consider overhead and cost in your decisions,” Koblin said.

Rita McGrath, academic director in executive education at Columbia Business School, said employers have been deploying many algorithms in hiring decisions over the years, and there are “pluses and minuses” to such practices.

She described the trend as “almost like a digital arms race” between employers and job candidates, with each side using more and more layers of automation. She likened it to the college application process, where prospective students use automation to send out mass applications and universities use automation to cull applicants. McGrath said technology has “completely weaponized” that process.

And though there have been concerns about biases baked into automated platforms in the context of employment decisions, she pointed out that there are instances where such tools could actually level the playing field. “An algorithm doesn’t care who you had cocktails with last week,” McGrath said. “It could open the doors for underrepresented groups.”

Still, if Oracle did indeed deploy such a tool to determine which employees to cut based on stock options, McGrath said she would “not characterize that as a constructive use of algorithms.”